25 Years Later: A Brief Analysis of GPU Processing Efficiency

The first 3D graphics cards appeared 25 years ago, and since then their power and complexity have grown faster than those of any other microchip found in a PC. In going from one million to billions of transistors, with smaller dies and higher power consumption, these behemoths have become immeasurably more capable. But what can we learn about their efficiency?

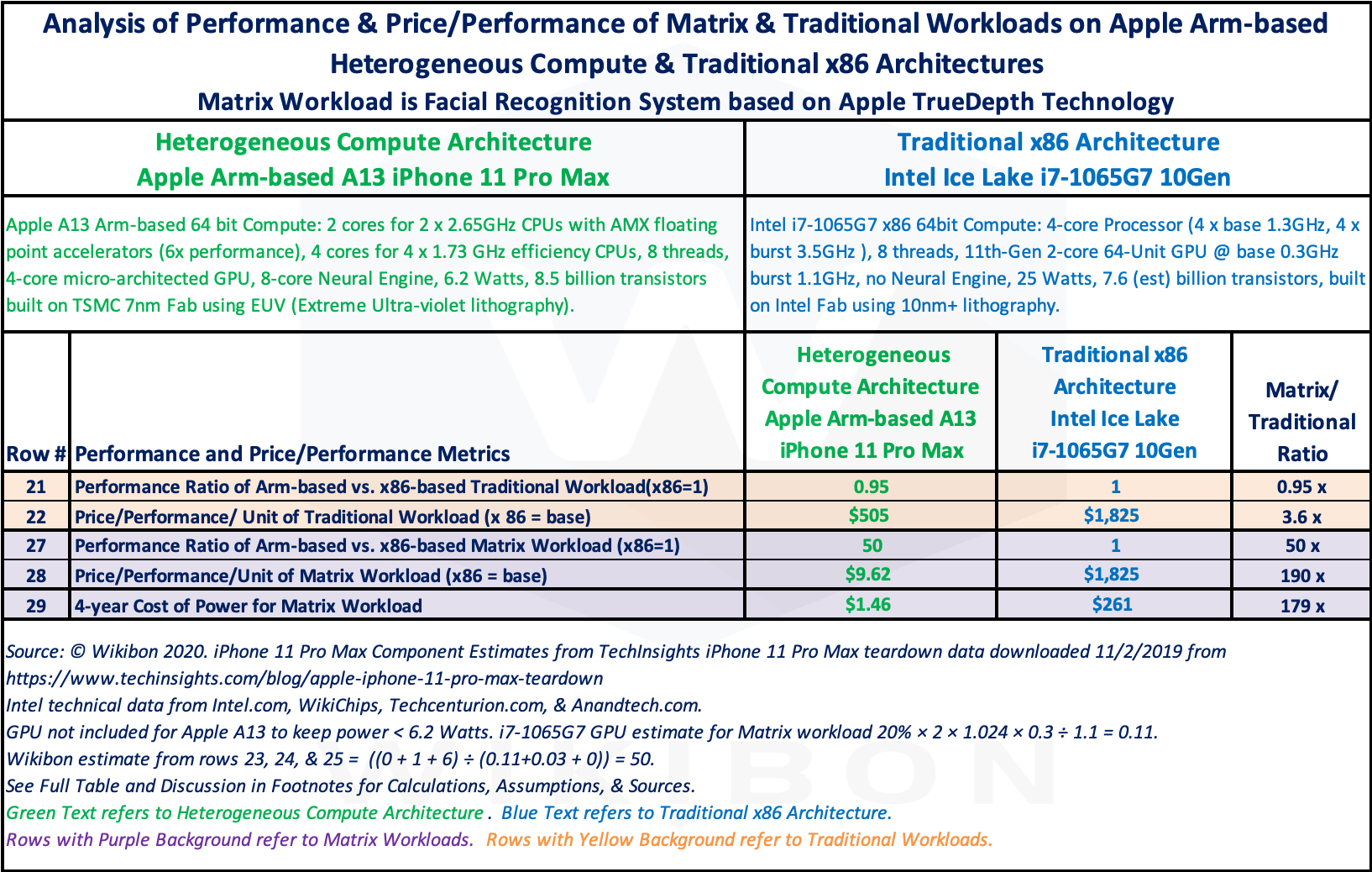

Arm Yourself: Heterogeneous Compute Ushers in 150x Higher Performance - Wikibon Research

NVIDIA GeForce RTX 4090 24GB Content Creation Review

Looking Beyond TOPS/W: How To Really Compare NPU Performance

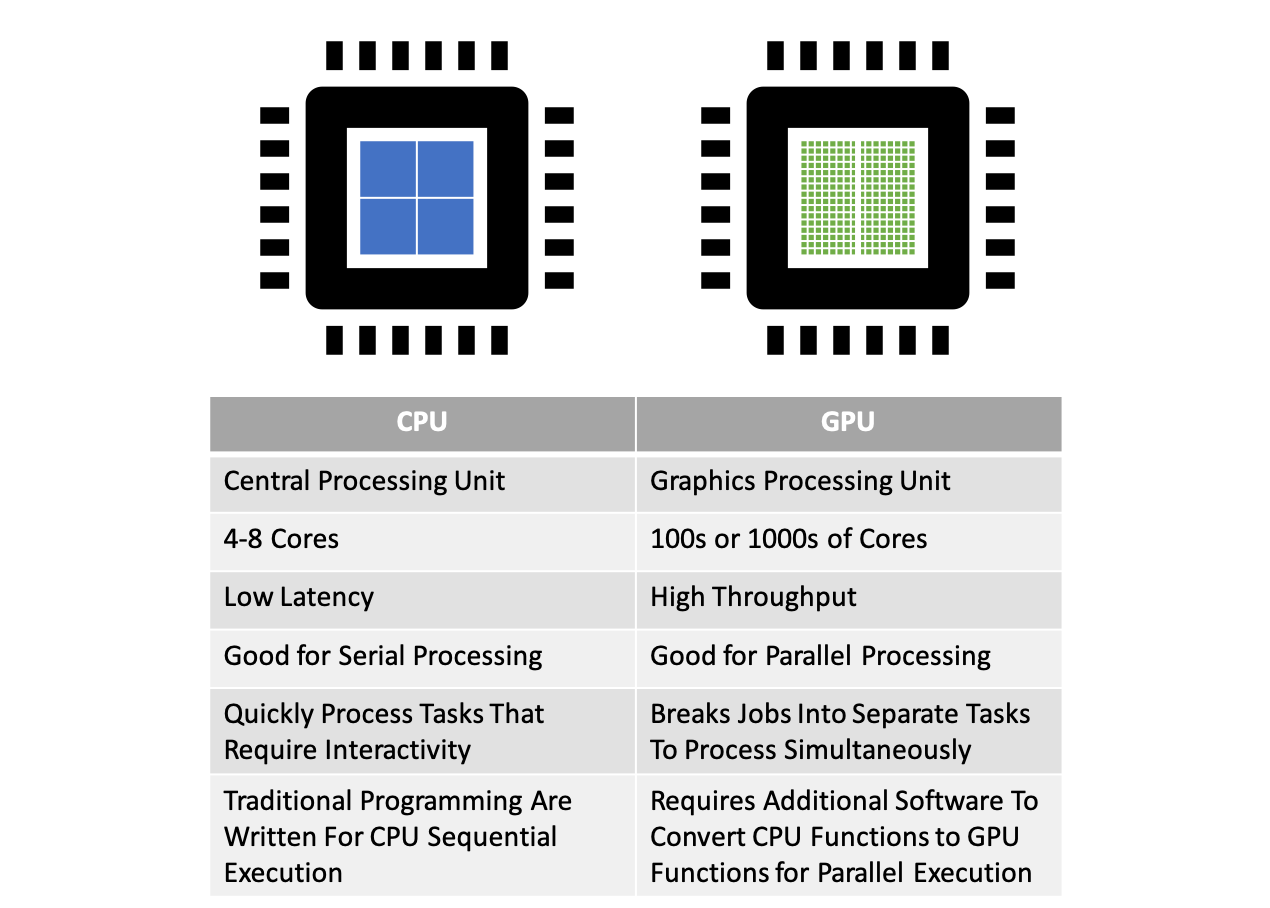

CUDA is a computing architecture designed to facilitate the development of parallel programs. In conjunction with a comprehensive software platform, the CUDA Architecture enables programmers to draw on the immense power of graphics processing units (GPUs) when building high-performance applications. GPUs, of course, have long been available for demanding graphics and game applications.

CUDA by Example: An Introduction to General-purpose GPU Programming [Book]

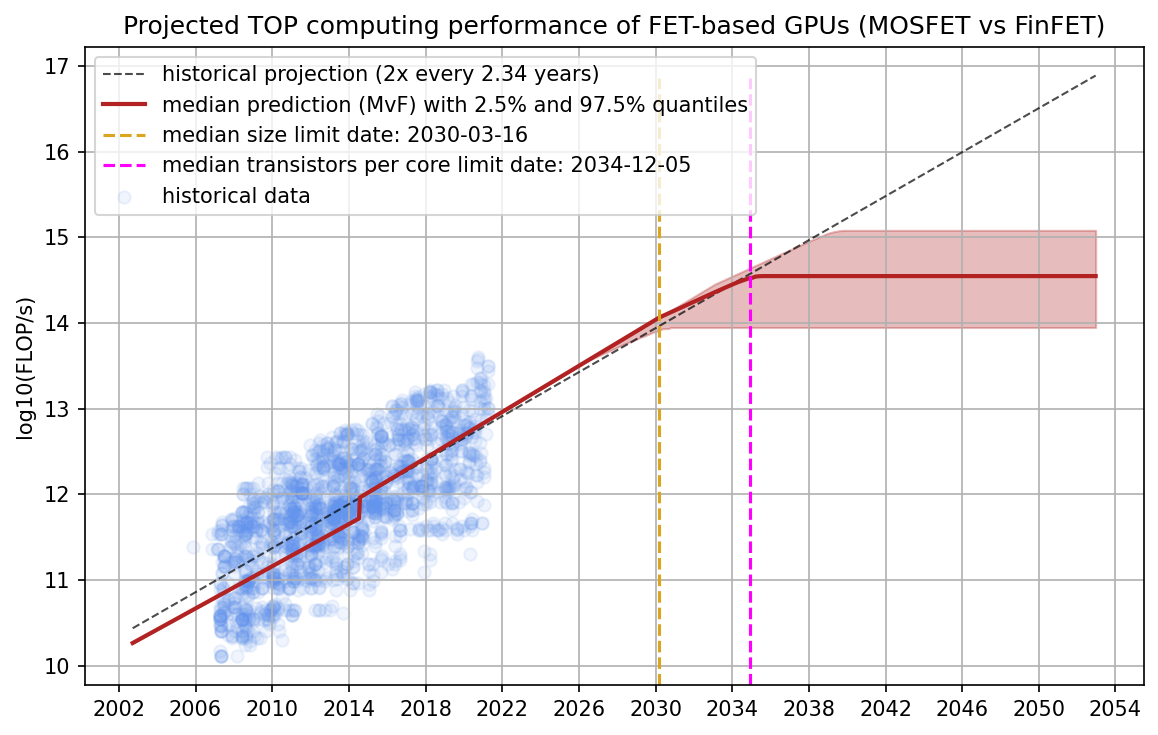

Predicting GPU Performance – Epoch

Performance Analysis of CP2K Code for Ab Initio Molecular Dynamics on CPUs and GPUs

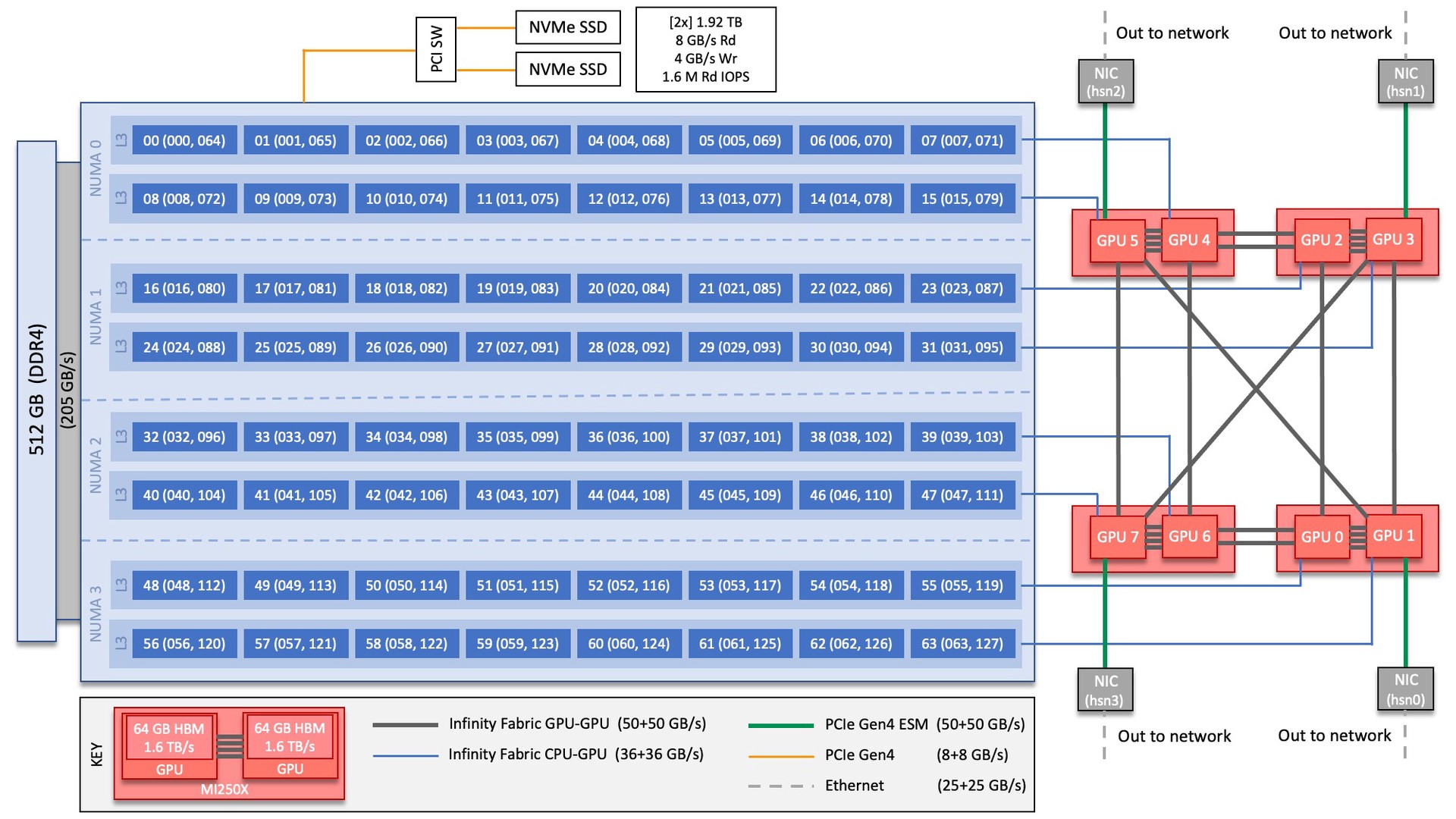

Crusher Quick-Start Guide — OLCF User Documentation

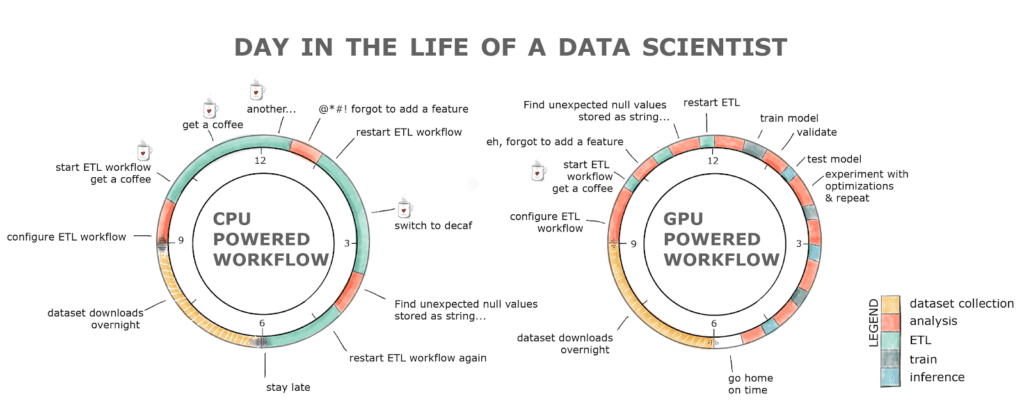

Parallel Computing — Upgrade Your Data Science with GPU Computing, by Kevin C Lee

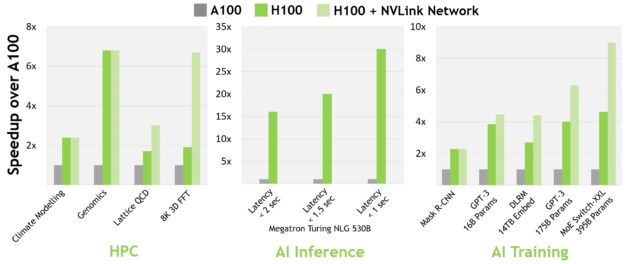

NVIDIA's 80-billion transistor H100 GPU and new Hopper Architecture will drive the world's AI Infrastructure

Is the Nvidia A100 GPU Performance Worth a Hardware Upgrade?

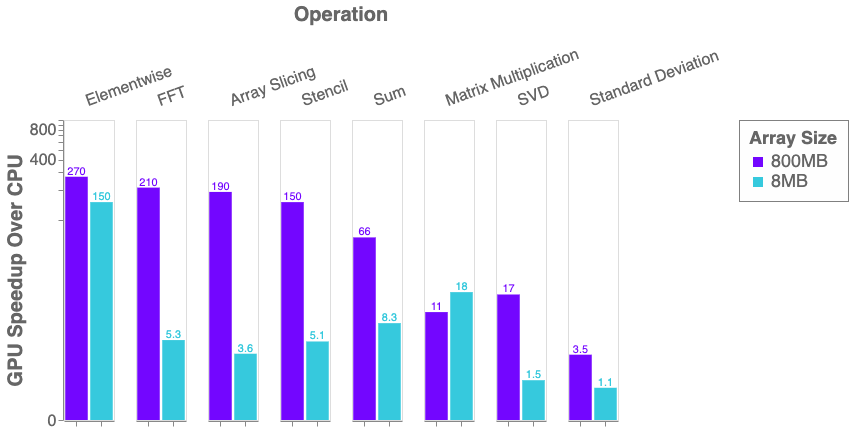

Python, Performance, and GPUs. A status update for using GPU…, by Matthew Rocklin

NVIDIA Hopper Architecture In-Depth

Mastering GPUs: A Beginner's Guide to GPU-Accelerated DataFrames in Python - KDnuggets